Common people getting hacked

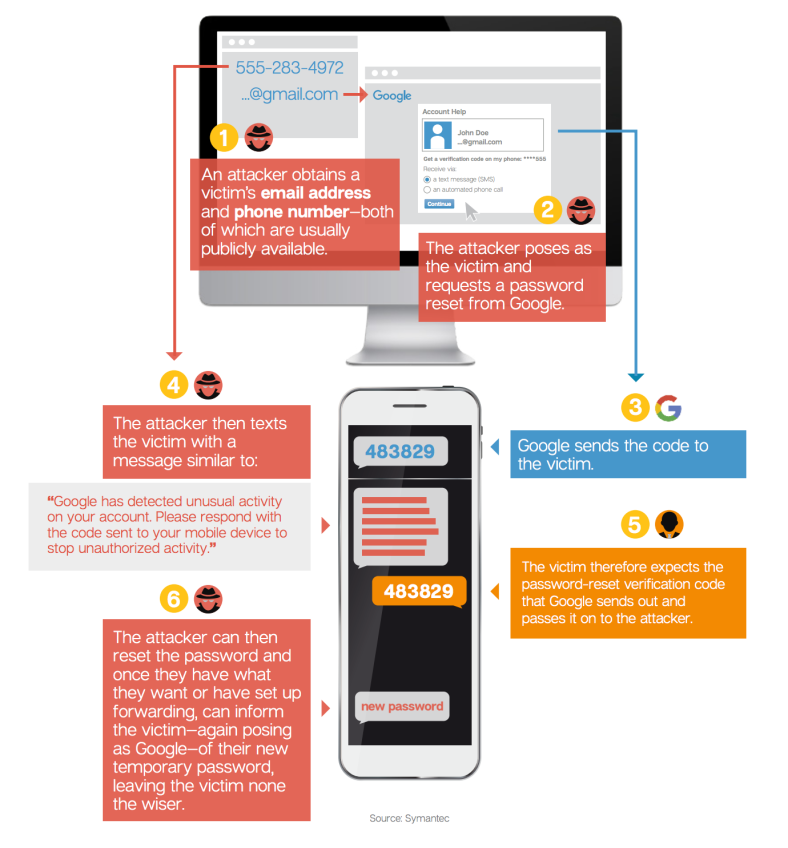

You may be familiar with the famous tale of Mat Honan, who was hacked through a chain of weaknesses across his linked Apple, Amazon and Google accounts. None of the individual systems was vulnerable to conventional hacking, but the hackers exploited the customer-service password recovery processes of all three services to get into his accounts. Symantec explains these kinds of social engineering exploits in its 2016 Internet Security Threat Report.

These real-life examples demonstrate that security is increasingly complex and multifactorial. As Gabi Siboni said:

“The multiplicity of threats in cyberspace and the ability of attackers to detect weaknesses and use them in operations requires a holistic view of organisational security.”

In the last 5 years, we have seen Big Data Analytics (BDA) employed effectively to better monitor threats, malware and intrusion events across various systems and heterogeneous data sources. We have also seen more specialised tools carrying out various functions in the total solution landscape.

The next step is to employ Artificial Intelligence (AI) tools to improve how we detect and respond to the threats surfaced by these large BDA platforms. In the ISSA Journal, Keith Moore writes (1):

“Artificial Intelligence (AI), the paradigm shift that will revolutionise the cybersecurity industry. It is capable of acting as a human analyst, but tirelessly at machine speed.”

He goes on to say: “The use of domain generation algorithms (DGAs) and polymorphism make malware much more difficult to detect and have led to 78 percent of security analysts no longer trusting the efficacy of antivirus tools.” Beyond these technical reasons for more sophisticated, harder-to-detect threats, the scope of the problem has grown as well: more users are exposed, and in greater volume, as lifestyles and services shift to digital. Users often consume applications and data across a myriad of distributed, heterogeneous repositories, which makes protecting them even more difficult. Threat mitigation now requires speed and accuracy beyond the capabilities of human agents.

Detecting & responding to threats at the speed of AI

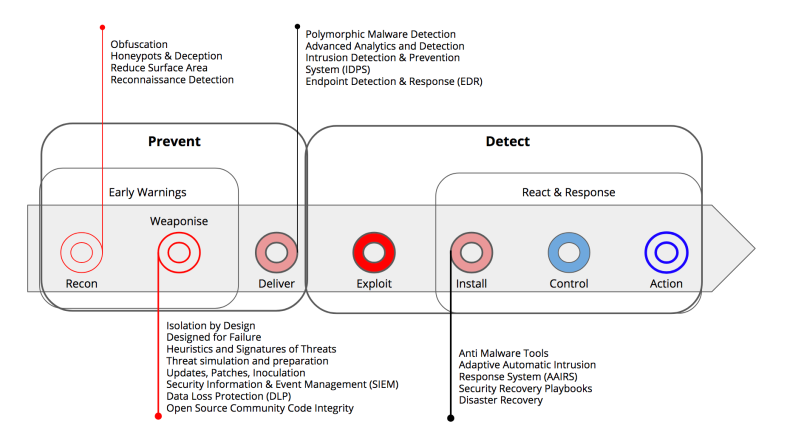

In the cyber kill chain model described by Wirkuttis and Klein (2), the goal of most cyber security is to prevent the exploitation of vulnerabilities in the network and systems being protected, and to keep eliminating opportunities for exploitation by threats that may have eluded detection. While specialised systems today can detect a threat upon delivery, AI can move the battle significantly upstream, from detection to prevention. AI can be used to predict possible exploits and vulnerabilities and to mitigate any possibility of delivery. It can even be trained to spot attempts at reconnaissance and help trace whoever is behind them.

AI will be able to employ Deep Learning and Deep Neural Networks (DNN) to recognise threats from known threat models and past examples, and to spot anomalies by modelling normal behaviour from observation over time. Supervised learning and training can help minimise noise from false positives and ensure that missed detections (false negatives) are fed back into the model.
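To make the idea of "modelling normal from observation over time" concrete, here is a minimal sketch. It deliberately swaps the deep neural network for a simple running z-score baseline, so it illustrates the principle only, not a production detector. The function name, the thresholds and the login counts are all hypothetical.

```python
from statistics import mean, stdev


def find_anomalies(observations, warmup=5, threshold=3.0):
    """Flag values that deviate strongly from the baseline learned so far.

    A z-score baseline stands in for the learned model of "normal";
    warmup and threshold are illustrative assumptions.
    """
    baseline = []   # values accepted as normal so far
    anomalies = []  # (index, value) pairs flagged as unusual
    for i, value in enumerate(observations):
        if len(baseline) >= warmup:
            mu = mean(baseline)
            sigma = stdev(baseline) or 1e-9  # guard against a perfectly flat baseline
            if abs(value - mu) / sigma > threshold:
                anomalies.append((i, value))
                continue  # keep the outlier out of the baseline
        baseline.append(value)
    return anomalies


# Hypothetical logins per hour for one account
logins_per_hour = [12, 15, 11, 14, 13, 12, 16, 240, 14, 13]
print(find_anomalies(logins_per_hour))  # [(7, 240)]
```

In a real system, an analyst's verdict on each flagged item (true threat or false positive) would be fed back as labelled training data, which is the supervised step described above.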

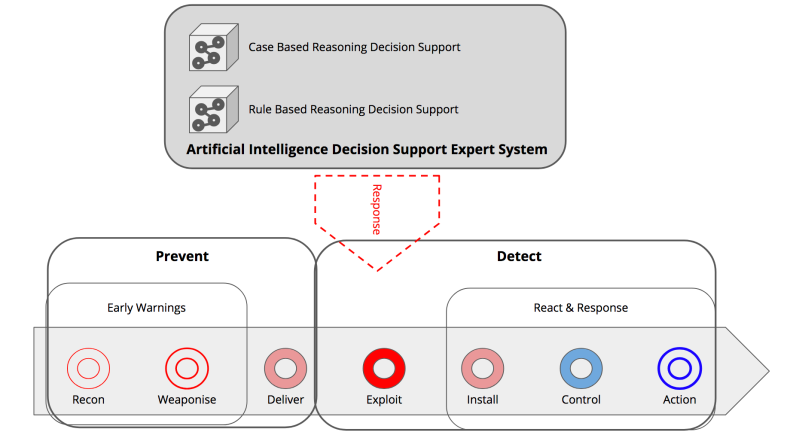

Furthermore, AI will be able to provide unparalleled decision support and insights to human first responders in the event of an attack. AI decision support expert systems will be equipped with a large compendium of previous attacks and threat playbooks from various sources, and Case Based Reasoning algorithms will help adapt the best mitigation strategy from them. This is complemented by Rule Based Reasoning decision support; combined, the two approaches best model human cognition, with the added benefit of processing at the speed and throughput of computers. Once these models are mature, responses can be automated as well.
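As a rough illustration of how Case Based and Rule Based Reasoning might work together, the sketch below retrieves the most similar past incident using Jaccard similarity over observed indicators, then applies a hand-written rule on top. The case library, indicator names, rule and responses are all invented for illustration and are not drawn from any real playbook.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    name: str
    indicators: frozenset
    response: str


# Hypothetical library of past incidents and the response that worked
CASE_LIBRARY = [
    Case("phishing campaign",
         frozenset({"suspicious_email", "credential_form", "new_domain"}),
         "reset credentials and block sender domain"),
    Case("ransomware outbreak",
         frozenset({"mass_file_rename", "smb_scan", "shadow_copy_deleted"}),
         "isolate affected hosts and restore from backup"),
    Case("account takeover",
         frozenset({"password_reset", "new_device", "foreign_login"}),
         "lock account and require identity verification"),
]

# Hypothetical rules: (condition on the observed indicators, extra action)
RULES = [
    (lambda obs: {"foreign_login", "new_device"} <= obs,
     "escalate to a human responder"),
]


def jaccard(a, b):
    """Similarity of two indicator sets: shared items over all items."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def recommend(observed):
    """Case-based step picks the closest past case; rule-based step adds actions."""
    observed = frozenset(observed)
    best = max(CASE_LIBRARY, key=lambda c: jaccard(c.indicators, observed))
    actions = [best.response]
    actions += [action for condition, action in RULES if condition(observed)]
    return best.name, actions


incident = {"password_reset", "new_device", "foreign_login", "suspicious_email"}
print(recommend(incident))
```

Here the incident shares three of its four indicators with the "account takeover" case, so that case's response is recommended, and the rule adds an escalation step because a foreign login from a new device was seen.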

AI to assist and monitor the human agents

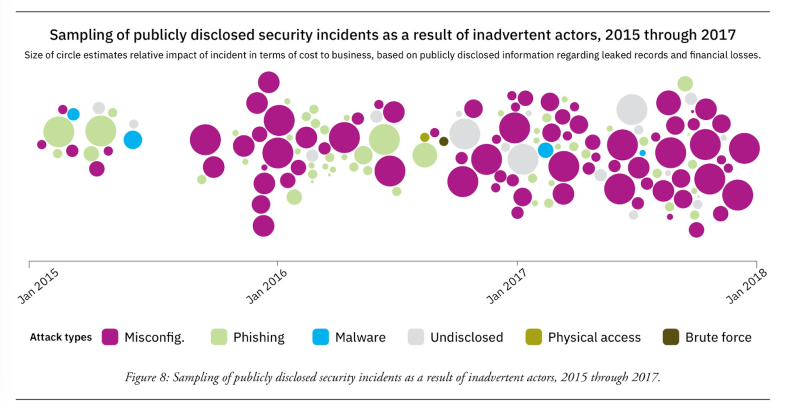

Another application for AI systems is finding vulnerabilities and helping human agents configure their security infrastructure correctly. Many vulnerabilities come from the complex cascading of systems; sometimes the user is the only interface between two systems, as in the example at the beginning. AI decision support tools could be employed to find threats and convey them, with recommendations, to human agents and users through self-help and education applications. The IBM X-Force report shows how many security incidents are caused by human error; this is something AI decision support and education tools could mitigate.
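One way such a tool might surface cascading weaknesses is to treat account recovery paths as a graph and ask what an attacker could reach from a single compromised account. The sketch below does this with a breadth-first search. The account names and links are hypothetical and do not reproduce the details of any real incident.

```python
from collections import deque

# Hypothetical map: controlling the key account lets an attacker
# reset or recover each account in the list.
RECOVERY_LINKS = {
    "shopping": ["cloud"],
    "cloud": ["email_primary"],
    "email_primary": ["social", "bank"],
    "social": [],
    "bank": [],
}


def exposed_accounts(start, links):
    """Return every account reachable from `start`, in breadth-first order."""
    seen = {start}
    queue = deque([start])
    reachable = []
    while queue:
        account = queue.popleft()
        for linked in links.get(account, []):
            if linked not in seen:
                seen.add(linked)
                reachable.append(linked)
                queue.append(linked)
    return reachable


print(exposed_accounts("shopping", RECOVERY_LINKS))
# ['cloud', 'email_primary', 'social', 'bank']
```

A decision support tool could run this kind of check for each account and recommend breaking the longest chains, for example by giving a critical account its own recovery method.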

AI concierge for my passwords

We are also beginning to see password management solutions sold to consumers, while Federated Identity Management and Single Sign-On services gain popularity in the enterprise. If we enhance these solutions with AI for prevention and detection, we could significantly improve how we monitor and educate users to keep them safe, and even predict malicious agents before they can act. Data Leak Prevention could be taken to the next level if AI automatically tracked and classified data and understood the context in which the user should be using it. Policies could be monitored and managed automatically. Of course, this does not have to feel like ‘big brother’: the AI could be given a friendly interface, such as a digital assistant or a chatbot, and be valued by users as a helper and concierge. Through IoT, biometrics and computer vision, AI could automatically recognise users and federate the access and privileges they need.

Footnotes

(1) “The Race against Cyber Crime Is Lost without Artificial Intelligence” by Keith Moore – ISSA Journal, Volume 14, No. 11, November 2016

(2) “Artificial Intelligence in Cybersecurity” by Nadine Wirkuttis and Hadas Klein – Cyber, Intelligence, and Security Journal, Volume 1, No. 1, January 2017